Insert rows

Inserts rows of data into a SQL Server database using a DataReader or a DataTable.

Use this action when you need to insert a large number of rows into a table.

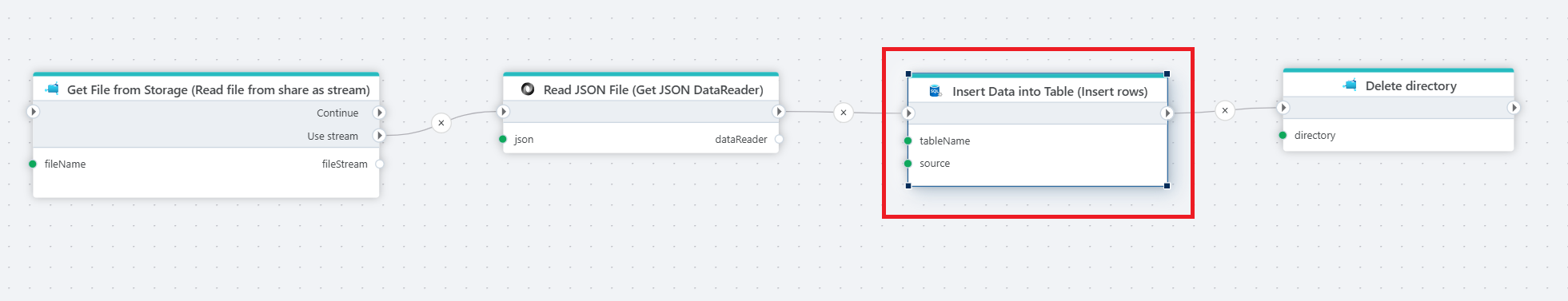

Example

The example above takes a file from storage, reads its JSON content, saves the data to a database, and then deletes the storage folder to keep things tidy. Actions used:

1. Read file from share as a stream
2. Get JSON DataReader
3. Insert rows
4. Delete directory
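The four steps in this example can be mimicked outside Flow with a short Python sketch. It is only an illustration under stated assumptions: a local temporary folder stands in for the file share, the file holds a JSON array of flat objects that all share the same keys, and the standard-library `sqlite3` module stands in for SQL Server. `run_pipeline` is a hypothetical name, not part of Flow.

```python
import json
import shutil
import sqlite3
import tempfile
from pathlib import Path
from typing import List, Tuple


def run_pipeline(share_dir: Path, file_name: str,
                 conn: sqlite3.Connection, table: str) -> int:
    """Read a JSON file, insert its records into `table`, then delete `share_dir`.

    Assumptions: the file contains a JSON array of flat objects with identical
    keys, sqlite3 stands in for SQL Server, and table/column names come from
    trusted configuration (they are quoted, not parameterized).
    """
    # 1. "Read file from share": a local folder stands in for the file share.
    with open(share_dir / file_name, encoding="utf-8") as f:
        records = json.load(f)

    # 2. "Get JSON DataReader": turn each JSON object into a row tuple.
    columns: List[str] = list(records[0].keys()) if records else []
    rows: List[Tuple] = [tuple(rec[c] for c in columns) for rec in records]

    # 3. "Insert rows": name the columns explicitly so the table's column
    #    order does not have to match the JSON key order.
    if rows:
        col_list = ", ".join(f'"{c}"' for c in columns)
        placeholders = ", ".join("?" for _ in columns)
        with conn:  # commits on success, rolls back on error
            conn.executemany(
                f'INSERT INTO "{table}" ({col_list}) VALUES ({placeholders})', rows)

    # 4. "Delete directory": remove the folder to keep things tidy.
    shutil.rmtree(share_dir)
    return len(rows)


if __name__ == "__main__":
    folder = Path(tempfile.mkdtemp())
    (folder / "orders.json").write_text(
        json.dumps([{"id": 1, "name": "a"}, {"id": 2, "name": "b"}]),
        encoding="utf-8")
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE orders (name TEXT, id INTEGER)")
    print(run_pipeline(folder, "orders.json", db, "orders"))  # prints 2
```

The destination table in the usage lists its columns in a different order from the JSON keys; the explicit column list in the INSERT is what makes that work in this sketch.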

Properties

| Name | Required | Description |
|---|---|---|
| Title | No | A descriptive title for the action. |
| Connection | Yes | The SQL Server connection. |
| Dynamic connection | No | Use this option to select a connection created by the Create Connection action. |
| Source | Yes | The data to insert: a DataReader or a DataTable. |
| Destination table | Yes | Select or enter the name of the table to insert the data into. |
| Batch size | No | The number of rows inserted per batch. The default is 5000. Batch size affects performance, and the optimal value depends on your data and environment, so test with representative data. |
| Result variable name | No | The name of the variable that will contain the number of rows inserted. |
| Command timeout (sec) | No | The maximum time, in seconds, to wait for the command to finish before it times out. The default is 120 seconds. |
| Description | No | Additional notes or comments about the action or configuration. |
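To picture what Batch size and Result variable name control, here is a minimal Python sketch, assuming the action streams the Source in fixed-size chunks and reports the total row count at the end. `insert_in_batches` and its `write_batch` callback are hypothetical names; the callback stands in for one round trip to the server and is not a Flow or SQL Server API.

```python
from itertools import islice
from typing import Callable, Iterable, List, Tuple

Row = Tuple[object, ...]


def insert_in_batches(rows: Iterable[Row],
                      write_batch: Callable[[List[Row]], None],
                      batch_size: int = 5000) -> int:
    """Pass rows to `write_batch` in chunks of at most `batch_size` rows.

    Returns the total number of rows written, the kind of value the
    Result variable would hold. Rows are pulled lazily, so a streaming
    source never has to be fully loaded into memory.
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be a positive integer")
    iterator = iter(rows)
    total = 0
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            break
        write_batch(batch)  # hypothetical: one round trip per batch
        total += len(batch)
    return total


# Usage: 12,001 rows with the default batch size produce three batches.
sizes: List[int] = []
count = insert_in_batches(((i,) for i in range(12001)), lambda b: sizes.append(len(b)))
print(count, sizes)  # 12001 [5000, 5000, 2001]
```

Under this assumption, a larger batch size means fewer round trips but more rows held per batch, which is one reason the best setting varies with row width and server load.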

Target table schema

The target table must have a schema (columns and data types) that matches the schema of the Source DataReader or DataTable.

The columns do not need to be in the same order, but they must match by name and data type.
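A quick way to sanity-check a Source against the destination before running the flow is to compare the two schemas as name-to-type maps, which makes column order irrelevant. The Python sketch below is a simplification under stated assumptions: `schema_mismatches` is a hypothetical helper, column names are compared exactly, type names case-insensitively, and it does not model implicit conversions or extra destination columns.

```python
from typing import Dict, List


def schema_mismatches(source: Dict[str, str], destination: Dict[str, str]) -> List[str]:
    """List problems that would stop source columns mapping onto the destination.

    Columns are matched by name, so their order is irrelevant. Names are
    compared exactly and type names case-insensitively (a simplification).
    """
    problems: List[str] = []
    for name, src_type in source.items():
        dst_type = destination.get(name)
        if dst_type is None:
            problems.append(f"missing destination column: {name}")
        elif dst_type.lower() != src_type.lower():
            problems.append(f"type mismatch for {name}: {src_type} vs {dst_type}")
    return problems


source_cols = {"id": "int", "name": "nvarchar(50)"}
target_cols = {"name": "nvarchar(50)", "id": "INT"}  # different order, same schema
print(schema_mismatches(source_cols, target_cols))  # []
```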

Note

If you get the error "The locale id '...' of the source column '...' and the locale id '...' of the destination column '...' do not match.", the source and destination columns use different collations (possibly because the databases have different default collations). To avoid this, do one of the following:

- Use a DataTable instead of a DataReader as the Source.

- Convert the column to the target collation in the query of the SQL Server Get DataReader action. For example:

```sql
SELECT ..., CONVERT(varchar(...), [column name] COLLATE target_collation_name) AS [column name], ...
```
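When several text columns are affected, writing this expression by hand for each one gets repetitive. The Python sketch below builds the query text, assuming every listed column is varchar with a known length. `collated_select` and `quote_name` are hypothetical helpers, and `dbo.Customers` and `Latin1_General_CI_AS` are illustrative values only; substitute your own table and the destination column's actual collation.

```python
from typing import List, Tuple


def quote_name(name: str) -> str:
    """Quote a SQL Server identifier with brackets, doubling any closing bracket."""
    return "[" + name.replace("]", "]]") + "]"


def collated_select(columns: List[Tuple[str, int]], table: str, target_collation: str) -> str:
    """Build a SELECT that re-collates each listed varchar column to `target_collation`.

    `columns` holds (column name, varchar length) pairs. `table` and
    `target_collation` are inserted as-is, so they must come from trusted
    configuration.
    """
    parts = [
        f"CONVERT(varchar({length}), {quote_name(name)} COLLATE {target_collation}) "
        f"AS {quote_name(name)}"
        for name, length in columns
    ]
    return f"SELECT {', '.join(parts)} FROM {table}"


print(collated_select([("City", 50)], "dbo.Customers", "Latin1_General_CI_AS"))
```

The printed query has the same shape as the single-column example above; paste the generated text into the SQL Server Get DataReader action's query.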

SQL Server: Videos / Getting started

This section contains videos to help you get started quickly with Azure SQL and SQL Server in Flow.

Dump CSV file from Azure Blob container to Azure SQL table

This video demonstrates how to import all records from a CSV file into an Azure SQL table.

In the demo, no data import options (such as data type conversion or number and date formatting) are specified, so the data is imported as raw text.