Chat Completion

This action defines a Microsoft Foundry chat completion model that processes a prompt, interprets the user’s intent, and generates the next response. Chat completion lets the model follow the conversation context and maintain a coherent dialogue.

Use this action in flows where you need the model’s complete output in a single, finalized response. Unlike the streaming version, it does not deliver partial tokens over time.

Example

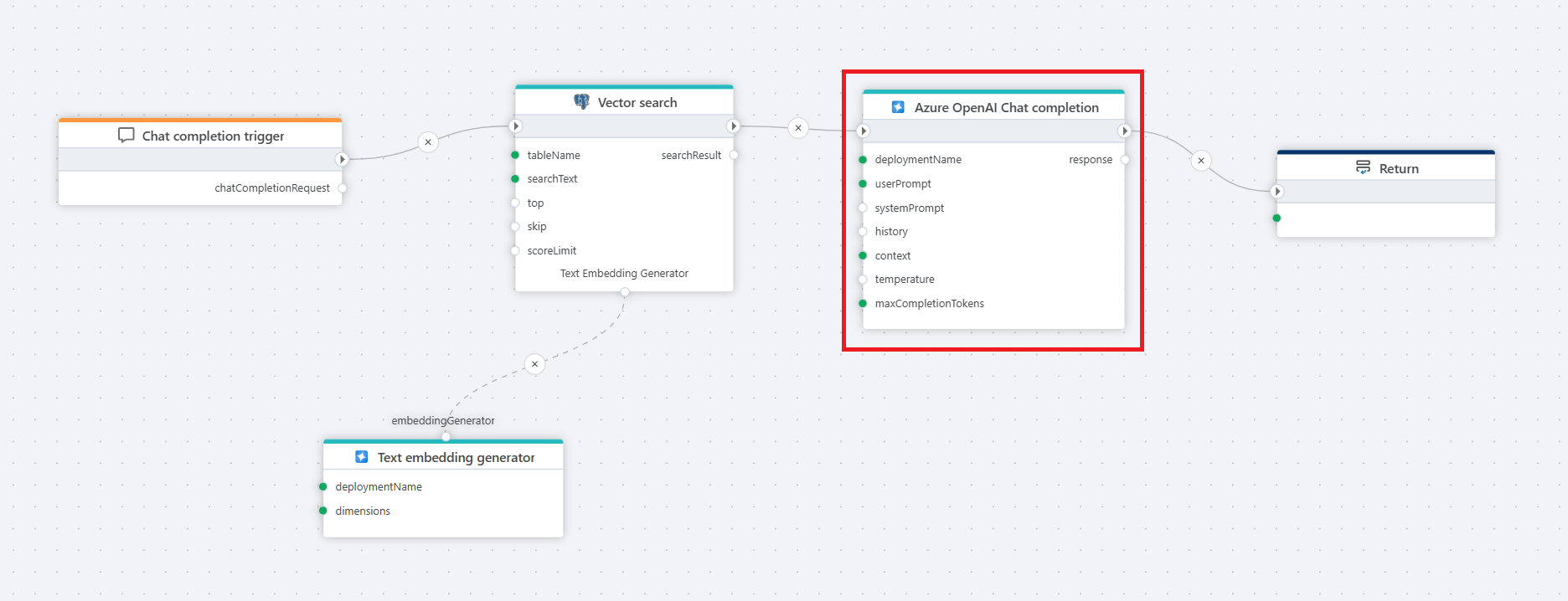

This flow answers a user's chat question. A Chat completion trigger receives the question, and a text embedder converts it into a vector. A Vector search in a PostgreSQL database then retrieves relevant context. Finally, the user input and the retrieved context are passed to an Azure AI Chat completion action, which generates a response that the Return node sends back to the client.
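The flow described above can be sketched in plain Python to show how the steps hand data to each other. This is only an illustration under stated assumptions: `embed`, `vector_search`, `chat_completion`, and `handle_question` are hypothetical stand-ins, the embedding is a toy bag-of-words vector, the search runs over an in-memory list instead of PostgreSQL, and no model is called.

```python
import math
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Toy stand-in for the text embedder: a bag-of-words vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def vector_search(query_vec: Counter, documents: List[str], top_k: int = 1) -> List[str]:
    """Toy stand-in for the vector search step (in memory, not PostgreSQL)."""
    ranked = sorted(documents, key=lambda d: cosine(query_vec, embed(d)), reverse=True)
    return ranked[:top_k]


def chat_completion(user_prompt: str, context: List[str]) -> str:
    """Placeholder for the chat completion action; no model is called."""
    return f"[answer to {user_prompt!r} using {len(context)} context item(s)]"


def handle_question(question: str, documents: List[str]) -> str:
    query_vec = embed(question)                    # convert the question to a vector
    context = vector_search(query_vec, documents)  # retrieve relevant context
    return chat_completion(question, context)      # generate the response to return


# Hypothetical sample data.
documents = [
    "The X100 pump weighs 12 kg",
    "The Z5 valve is rated for 10 bar",
]
question = "How much does the X100 pump weigh"
print(handle_question(question, documents))
```

In the real flow each function corresponds to a separate node, and the retrieved context is passed to the chat completion action through its Context property.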

Properties

| Name | Required | Description |

|---|---|---|

| Title | No | The title of the action. |

| Connection | Yes | Defines the connection to Microsoft Foundry resource. |

| Enable dynamic connection | No | A Dynamic Connection will override the connection on flow execution. |

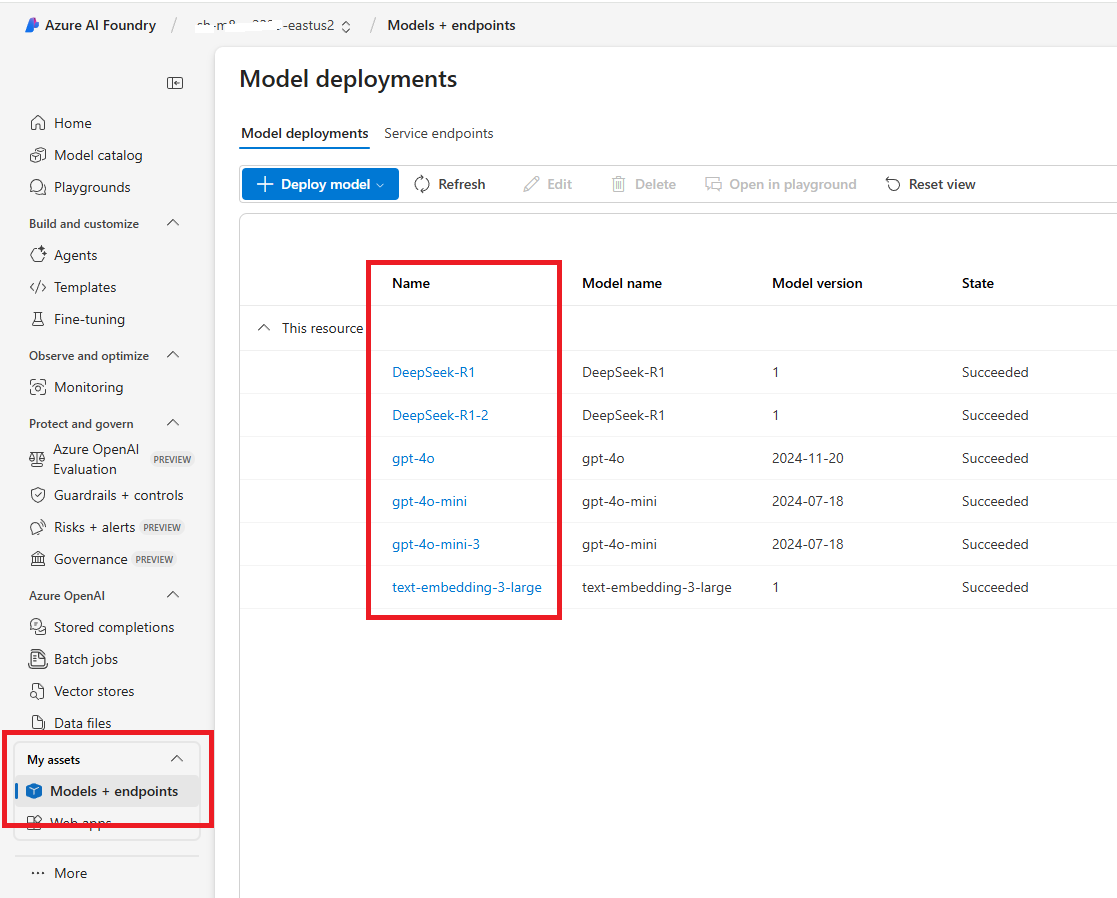

| Model | Yes | Specifies the model deployment name, which corresponds to the custom name chosen during model deployment in the Azure portal or in Microsoft Foundry (see below). In the Azure Portal, the deployment name can be found under Resource Management > Model Deployments. |

| User Prompt | Yes | The input message from the user, which the model processes to generate a response. |

| System Prompt | No | A system-level instruction that guides the model’s behavior and response style. |

| History | No | A record of past interactions that provides context to the conversation, helping the model maintain continuity. |

| Context | No | Typically used for RAG, and provides additional information or domain-specific knowledge to the chat model so it can make more accurate responses. The input can be a string (text) or a vector search result, such as the result from the PostgreSQL Vector Search action. |

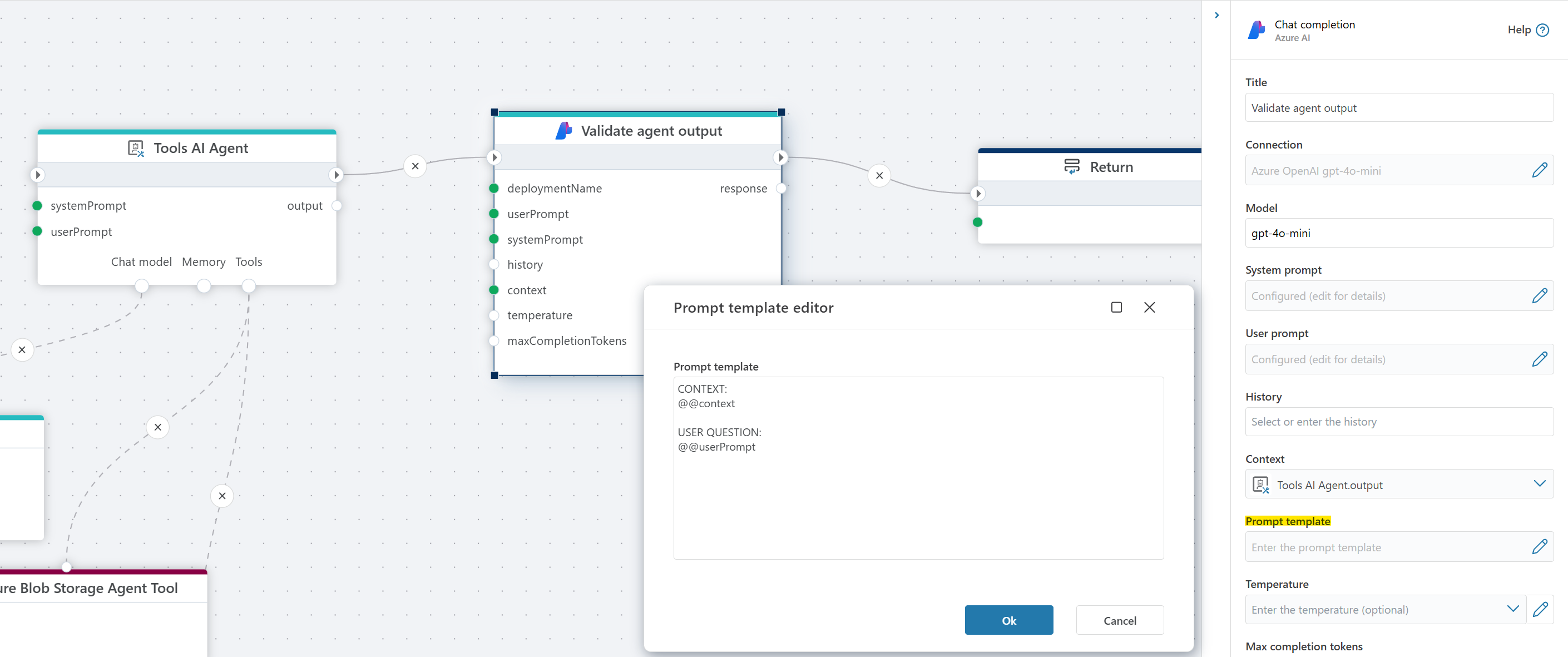

| Prompt template | No | Defines the structure of the prompt sent to the model. The system replaces the placeholders @@context and @@userPrompt with the relevant information. See example below. |

| Temperature | No | Temperature in models controls the randomness and creativity of the generated responses. Lower temperatures (e.g., 0.2) produce more focused, predictable text, ideal for tasks that require precision. Higher temperatures (e.g., 1.5) increase creativity and variability, but may risk generating less coherent or relevant content, making it important to adjust based on your desired outcome. The default is 0.7 if nothing is defined by the user. |

| Max Completion Tokens | No | Sets a limit on the number of tokens (words, characters, or pieces of text) in the model’s response. |

| Result Variable Name | No | Stores the generated AI response. Default: "response". |

| Enable Grounding | No | Enables grounding to improve factual accuracy using external or structured context. |

| Disabled | No | If enabled, the action is skipped during flow execution. |

| Description | No | Additional details or notes regarding the chat completion setup. |
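To make the Temperature property more concrete, the following sketch shows how temperature scaling generally works when a language model samples its next token. It is a conceptual illustration, not a description of how this action or the underlying service is implemented. The `softmax_with_temperature` function and the sample scores are hypothetical.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw token scores into probabilities, scaled by temperature."""
    if temperature <= 0:
        # Simplification for this sketch: only positive temperatures are handled.
        raise ValueError("temperature must be positive")
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


# Hypothetical scores for three candidate next tokens.
logits = [2.0, 1.0, 0.1]

for temperature in (0.2, 0.7, 1.5):
    probabilities = softmax_with_temperature(logits, temperature)
    print(temperature, [round(p, 3) for p in probabilities])
```

At a low temperature almost all probability goes to the highest-scoring token, which is why the output becomes more predictable. At a higher temperature the probabilities are spread more evenly, so less likely tokens are chosen more often.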

Returns

The action returns a single AIChatCompletionResponse object containing the generated message, token usage details, finish reason, and the raw Azure response.
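As a rough mental model of the four parts listed above, the response can be pictured as a small record. The class and field names below (`ChatCompletionResult`, `TokenUsage`, `message`, `usage`, `finish_reason`, `raw`) are assumptions made for illustration and are not the documented members of AIChatCompletionResponse. The check for the value `"length"` assumes the finish reason is passed through unchanged from the Azure OpenAI chat completions API, where it indicates that the token limit cut the response short.

```python
from dataclasses import dataclass, field
from typing import Any, Dict


@dataclass
class TokenUsage:
    """Hypothetical token usage details."""
    prompt_tokens: int
    completion_tokens: int

    @property
    def total_tokens(self) -> int:
        return self.prompt_tokens + self.completion_tokens


@dataclass
class ChatCompletionResult:
    """Hypothetical mirror of the parts named in the Returns section."""
    message: str
    usage: TokenUsage
    finish_reason: str
    raw: Dict[str, Any] = field(default_factory=dict)


def was_truncated(result: ChatCompletionResult) -> bool:
    """Assumes "length" means the token limit ended the response early."""
    return result.finish_reason == "length"


# Hypothetical sample values.
result = ChatCompletionResult(
    message="The X100 pump weighs 12 kg.",
    usage=TokenUsage(prompt_tokens=85, completion_tokens=9),
    finish_reason="stop",
)
print(result.message, result.usage.total_tokens, was_truncated(result))
```

A check like this can be useful after setting Max Completion Tokens, since a low limit may end the response before the model has finished.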

Models + Endpoints

To find the model deployment name, look in the Models screen in Microsoft Foundry.

Prompt template

The prompt template allows you to specify the format of the prompt that is sent to the language model. This is useful for customizing how context and instructions are provided to the model. Within the template, you can use the following placeholders:

@@context: This is replaced by the "Context" property value.

@@userPrompt: This is replaced by the "User Prompt" property value.

The system will substitute these placeholders with the corresponding values before sending the prompt to the model.

Example
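The following is a minimal sketch of how the placeholder substitution might behave. Only the `@@context` and `@@userPrompt` placeholders come from this page. The template wording, the `render_prompt` helper, and the sample values are hypothetical, and the actual substitution is performed by Flow, not by this code.

```python
import re

# Hypothetical template text; only the two placeholders are documented.
TEMPLATE = (
    "Answer the question using only the context below.\n"
    "\n"
    "Context:\n"
    "@@context\n"
    "\n"
    "Question: @@userPrompt"
)


def render_prompt(template: str, context: str, user_prompt: str) -> str:
    """Illustrative stand-in for the substitution step."""
    values = {"context": context, "userPrompt": user_prompt}
    # A single pass, so placeholder-like text inside a value is left untouched.
    return re.sub(r"@@(context|userPrompt)", lambda m: values[m.group(1)], template)


prompt = render_prompt(
    TEMPLATE,
    context="The X100 pump weighs 12 kg.",
    user_prompt="How heavy is the X100?",
)
print(prompt)
```

A template like this places the retrieved context before the question and tells the model to rely on that context, which is a common pattern in RAG flows.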

Azure AI: Videos / Getting started

This section contains videos to help you get started quickly with Azure AI in your Flow automations.

Create an AI chat solution using InVision and Flow

This video shows how to build an AI chat solution using Hypergene InVision and Flow. It covers how to populate a vector database and how to use it in a RAG-based chat completion flow that answers user questions from PDF product sheets.